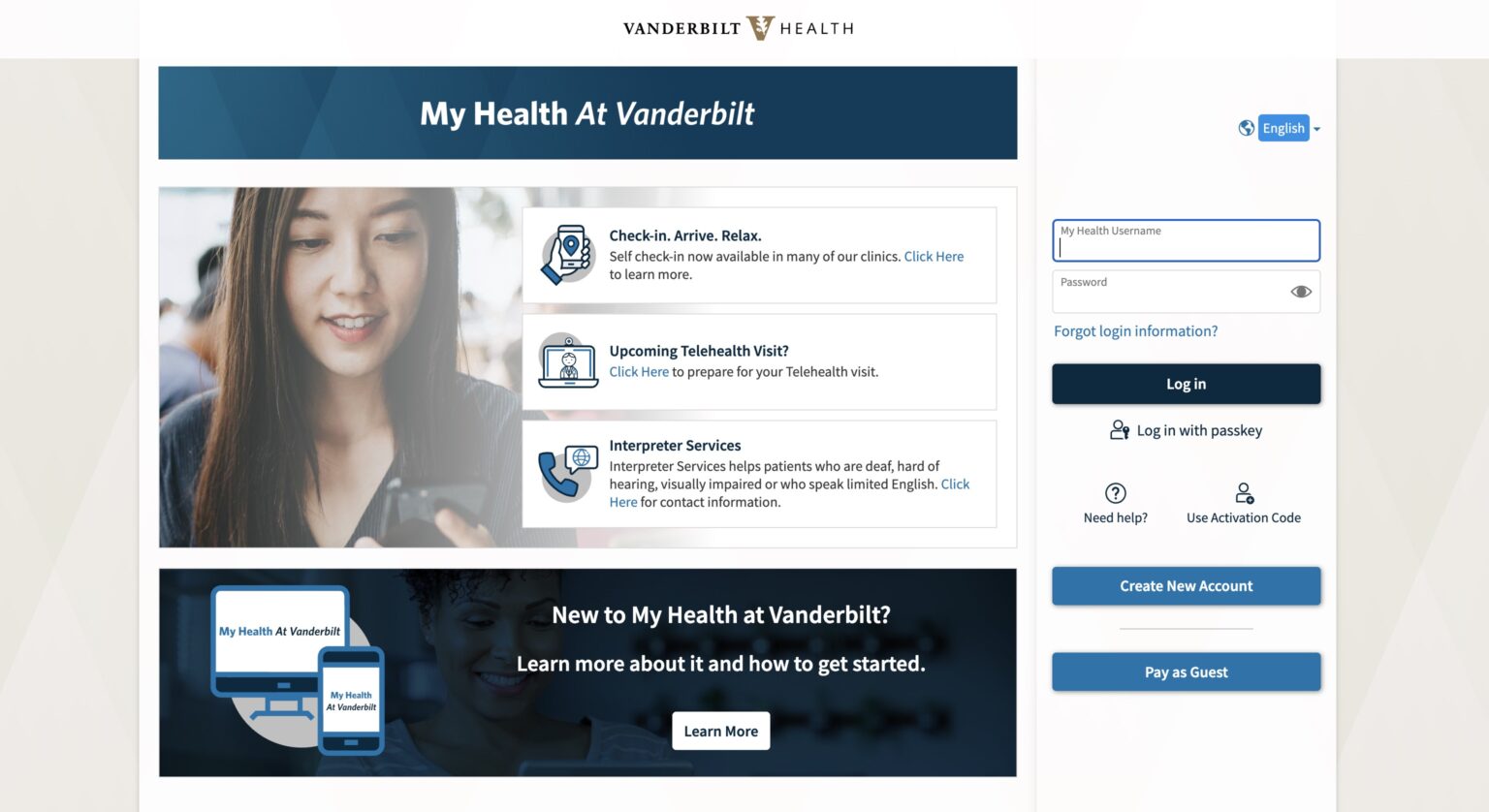

My Health at Vanderbilt (screenshot)

A new artificial intelligence (AI)-powered virtual assistant is being introduced in My Health at Vanderbilt, the health system’s patient portal, helping patients craft clearer, more complete messages to their care teams and aiming to spare both patients and clinicians the back-and-forth that can delay answers.

The tool, which began a phased implementation April 1 when it went live at Primary Care One Hundred Oaks, will be the first patient-facing AI deployed at scale at Vanderbilt Health. When a patient selects “medical question” in the portal, they can opt to use the assistant, which asks a few brief, conversational follow-up questions tailored to the concern at hand — a new symptom, a postoperative update, a question about a medication — and then drafts a more detailed message for the patient to review. Patients keep full control: They can decline further questions at any point, edit the draft freely, or send their original wording unchanged.

“When patients message us, we want to give a thorough, accurate answer the first time,” said infectious diseases specialist Patty Wright, MD, Chief Medical Officer for Adult Ambulatory Clinics. “Too often that requires details we have to circle back for, which leaves the patient waiting another day. This tool helps surface those details up front.”

The assistant is built on a large language model (LLM) from San Francisco-based OpenAI, accessed through a patient privacy-compliant Microsoft Azure cloud computing environment Vanderbilt Health already uses for clinical applications; none of the patient information is retained by the LLM provider.

What makes the tool a Vanderbilt Health product, rather than a generic chatbot, is the layer of institutional knowledge supplied with every interaction. Behind the scenes, each patient question is paired with an extensive set of Vanderbilt Health triage protocols and care-routing instructions, along with nationally recognized nursing triage guidelines. This pairing is an LLM technique known as retrieval-augmented generation. The model draws on that context to ask the kinds of follow-up questions a Vanderbilt Health nurse or access agent would ask, while staying within firm guardrails: It does not offer medical advice, and it preserves plain-language wording rather than substituting clinical jargon.
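For readers curious about what retrieval-augmented generation looks like in practice, the following is a minimal Python sketch of the general idea, not the Vanderbilt Health implementation. Everything in it is an assumption made for illustration: the protocol snippets are invented placeholders, the keyword-overlap scoring stands in for whatever retrieval method the real system uses, and the names `Protocol`, `retrieve` and `build_prompt` are hypothetical. No language model is called; the sketch only shows how a patient's message might be combined with retrieved reference text and guardrail instructions before being sent to a model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protocol:
    title: str
    text: str


# Invented placeholder snippets, NOT real triage protocols.
PROTOCOLS = [
    Protocol("Medication questions",
             "Ask which medication, the dose, and when the last dose was taken."),
    Protocol("Postoperative updates",
             "Ask the surgery date, wound appearance, and whether there is fever."),
    Protocol("New symptoms",
             "Ask when the symptom started, how severe it is, and what makes it better or worse."),
]

# Guardrail wording paraphrased from the article; the real instructions are not public.
GUARDRAILS = (
    "Ask brief follow-up questions only. Do not offer medical advice. "
    "Keep the patient's plain-language wording."
)


def tokenize(text):
    """Lowercase the text and return its set of words with basic punctuation stripped."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(question, protocols, k=1):
    """Return the k protocols sharing the most words with the question (toy scoring)."""
    question_words = tokenize(question)
    ranked = sorted(
        protocols,
        key=lambda p: len(question_words & tokenize(p.title + " " + p.text)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, protocols, k=1):
    """Combine guardrails, retrieved reference text, and the patient's message."""
    context = "\n".join(
        f"- {p.title}: {p.text}" for p in retrieve(question, protocols, k)
    )
    return (
        f"{GUARDRAILS}\n\n"
        f"Reference protocols:\n{context}\n\n"
        f"Patient message:\n{question}"
    )


if __name__ == "__main__":
    print(build_prompt("I have a question about my medication dose", PROTOCOLS))
```

In this toy version, a message about a medication dose pulls in the medication snippet, so a model receiving the prompt would have the relevant follow-up questions in front of it. A production system would likely use a more robust retrieval method and a much larger reference set.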

“This is the kind of work Vanderbilt is uniquely positioned to do — taking a research idea, validating it carefully, and building it into the workflow our patients actually use,” said Adam Wright, PhD, professor of Biomedical Informatics and Medicine, director of the Vanderbilt Clinical Informatics Center, and holder of the DBMI Directorship in Clinical Informatics. The project grew out of a 2024 study published in the Journal of the American Medical Informatics Association, in which Wright, Siru Liu, PhD, and colleagues showed that AI-generated follow-up questions could match clinician-written ones for clarity and concision and exceed them for utility.

Translating that research into a live clinical tool required collaboration between the Department of Biomedical Informatics and HealthIT, along with review by legal, compliance, quality and safety teams.

“We were intentional from the start about measuring how this performs in the real world,” said general surgeon Chetan Aher, MD, Associate Chief Medical Officer for Adult Ambulatory Clinics. “We’re tracking how patients use the tool and whether we’re shortening the time it takes them to get an answer. That data will guide every refinement.”

The rollout will proceed in stages over the coming months, expanding gradually to additional clinics. Eventually the assistant will become the default messaging experience, though patients will remain free to send their messages exactly as they wrote them.

“Bringing AI into a patient-facing tool demands extraordinary care, and our team has built this with the right safeguards in place,” said endocrinologist and clinical informaticist Dara Mize, MD, Chief Medical Information Officer at Vanderbilt Health. “Grounding a powerful general model in Vanderbilt’s own clinical knowledge is an approach we expect to apply to a range of future applications.”

Major contributors to the project include Chetan Aher, Dan Albert, Babatunde Carew, Brian Carlson, Jared Cobb, Julian Genkins, Christina Grimes, Andrew Guide, Colin Heeke, Allyson Hobbie, Sean Huang, Sunil Kripalani, Yaa Kumah-Crystal, Joey LeGrand, Siru Liu, Sarah Marbach, Craig McQuistion, Jenessa Miller, Amanda Mixon, Dara Mize, Neal Patel, Josh Peterson, Neeraja Peterson, Judy Ralph, Danny Roberts, Chris Rockwell, Kelsey Rodriguez, Susannah Rose, Trent Rosenbloom, Peter Samuel, Peter Shave, Jason Slagle, Sarah Stern, Diane Steward, Jeremy Turkett, Mike VanMaanen, Nicole Werner, Aileen Wright, Patty Wright, and Adam Wright.

Trent Rosenbloom, MD, MPH, professor of Biomedical Informatics, Medicine, Pediatrics and Nursing, is the director of My Health at Vanderbilt.